Election years call for new and intrepid ideas, policies powered to rise above the rude hurly burly of we vs. they, consecrating their noble intentions to nothing less than the greater national good and putting the sectarians to rout. So here’s my idea: Let us tax all Americans in proportion to their Twitter following.

Fair, indubitably transparent, and toughened against all loopholes, the plan would finally redistribute the wealth of Bill Gates (8,505,521 followers) until we’d get to call it the Melinda Gates Foundation, expropriate the assets of Lady Gaga (follower count: an end-of-civilization-as-we-know-it 30,211,539), and gladden the meek and diffident Twitterers with their double-digited coteries, who’d be emboldened to slip the plain brown wrappers off their bankbooks with pride. And once implemented we’d never again have to pester Mitt Romney about his returns; we’d know. In short, economic justice at last.

But there’s more you can do with Twitter than just hammer another plank into some dreamer’s platform. There’s information sounding faintly through the white noise – at least I think there is, somewhere, somehow; and indeed, a thick catalogue of apps out there perform, or purport to perform, all manner of analysis on the billions of tweets riddling the cyber cosmos.

Now while I can hardly claim to have surveyed all of these (and you’d probably think ill of me had), but after some concerted fooling around with a number of candidates I’ve cast my vote – a provisional one, mind you – for www.twdocs.com, a free and acceptably easy, and remarkably speedy tool for downloading tweets by any key term into Excel. But the analysis writ large, requires about two-and-a-half-steps – that is, getting the data into your worksheet, and then deciding what you can learn from them. The half-step trudges between the other two – understanding what sort of data you’re about to get, and by extension, perhaps, what you’re not going to get from twdocs and Twitter in general.

Some Caveats

That is to say: the analysis of tweet traffic – the figuring of tweet (and retweet) frequencies by key term broken out by month, day, hour, minute, or perhaps even seconds, user stats, and perhaps even a bit of content analysis – is constrained in part by the number of tweets you can rightly hope to access. It appears Twitter sizes its tweet-download maximum at about the most recent 1500 (the number I’ve seen at numerous app sites), and twdocs appears to reach back to only the last week’s worth of tweets, an interval which may slim some outputs well beneath 1500, simply because the searched-for term hasn’t been invoked that often in the past seven-or-so days. Also, the results aren’t quite real-time, though they’re real close. In addition, while every tweet entry that boxes itself in your cells is time-stamped the entries need to be calibrated to the understanding that people are tweeting worldwide, so one tweeter’s 8:00am is another’s 1:00pm. (The data I download seem to lag precisely an hour behind my Greenwich time zone, which either bespeaks an hour delay or the fact that twdoc’s server is somewhere over the Atlantic. In fact and for what it’s worth, twdoc is Latvia-based; in any case you should check the data against your zone.) So, if among other things you’re interested in the rates of tweeting activity for a given topic, these data should stand you in good stead.

Keep in mind as well that you can download data for the same search item the next week, too, at which point you can stack this week’s output atop the earlier ones, and thus still accumulate a large, relatively current and continuous batch of tweets. As we hope to demonstrate, you probably want to search for terms that capture your intent as generically as possible. You can search for @obama, #obama, and Obama, but the last-named should excavate the largest set of entries, and it does. A short while prior to typing this sentence, I entered Obama (in search of a max of 1200 results) and came away with 1200 entries spanning 2 minutes and 56 seconds. Entering #obama just a bit later yielded its 1200 in 1:58:05, or nearly two hours. The twdocs engine seems impressively accurate and comprehensive.

Giving It A try

Getting twdocs to work seems pretty straightforward. After supplying the site with your Twitter identity and following the start instructions (most of which I no longer remember, truth to be told; you will in addition be asked to consent to twdoc’s access of your identity whenever you log into the site) you should be transported here:

My user experience advises me to first click the CSV (comma separated values) option (in actuality a less troublesome selection than XLS) and then to click the Latest SEARCH results for…link. You’ll be brought here:

(Note the results box, in which you can type a figure topping at 1500. Note in turn the varied format options, although again, for spreadsheet purposes I use CSV – it just happens to provide a cleaner alternative, and I’m presently bypassing the advanced options for a reason). You can basically type anything in the Query field, e.g., @obama, #obama, or just plain Obama. (You can apparently type John Doe without the modifying “ “.) Then click Go. Soon this bar should stretch itself out in the lower reaches of your screen:

Just click Open.

For illustration’s sake, I downloaded tweets subsuming the term bigdata, something of a hot topic these days (and not to be confused with bighair), and saved the results as an Excel file (diverting it from its default CSV format).Here’s the file:

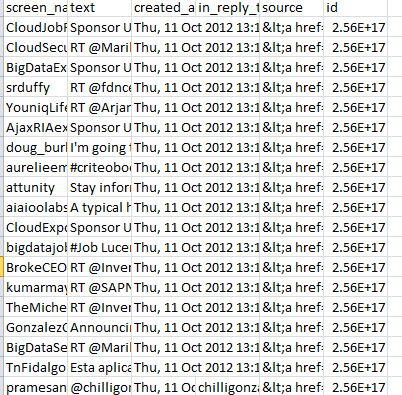

For starters, the data look something like this:

After retailoring the column widths, we need decide what to do next. At least two of the presented columns, in_reply_to and source, seem be of no informational consequence; the id field, currently formatted in Scientific notation mode, might however have a role to play in the analysis, as we hope to show. (If you want to see the ids in their standard numeric incarnation select the entire column and click Number in the drop-down menu heading the Home tab >Number button group. Never mind the decimal points.)

A Tried and Tested Tool

Now if you’re interested in rates – i.e., tweets per hour, etc. – you most assuredly will be interested in the created_at field in column C (needless to say, you can rename the columns). The data here amalgamate weekday, day of month, and time of tweet data, and as such need to be broken out. And the instrument towards this end is none other than the classic Text to Columns options, which dices information in a cell into sections, the widths of which are your call. When all is said and done we want to have assigned weekday, day-month, hour, and minute to discrete, independent columns, the better to pivot table them and the like.

Applying Text to Columns is usually (usually) trouble-free. To start, select the C column, and click the Data tab > Text to Columns in the Data Tools button group. You should see:

Note that Excel has typed the data as fixed width, the nature of which is explained alongside the term. In fact, every expression the C column happens to be 31 characters long (that is, fixed), and appears to be cleanly separable into columns wherever a space is interpolated as we’re told above. Click Next, and you’ll see

Again, Excel has drawn demarcating lines at each space, proposing to split each cell’s data into the respective columns recommended above. But we’ve been entrusted with some discretion in this matter, and what I want to do gather the 11, the Oct, and the 2012 into a single cell instead of the proposed three showing in the screen shot. In order to realize that efficiency I’m going to double-click the lines separating the 11 and Oct, and Oct and 2012, as per Excel’s instructions:

You’ll see why, I hope. Then click Next:

Now take note of what we want to see, and what we don’t want to see. My plan is to bead together 11-Oct-2012 in the first data row, for example, a mathematical date (as opposed to text) entry from which we can then ferret weekday, month, and year if we want to. If I’ve gotten that right, then we don’t need the leftmost Thu, entry, with that superfluous comma that would have to squelched, if only for formatting reasons. But again, if I’ve played my cards right, we’ll see that the day is already folded into 11-Oct-2012, as it were. In that light I can click the column header above Thu, and click the Do not import column (skip) button, and do the same for the column sporting the redundant and ultimately useless +0000 data:

Then click Finish. You should see:

Remember we’re about to perform an act of fission, parting the column on which we’re working into two serviceable columns – and Excel in turn won’t make room for the new field(s) by inserting a corresponding set of insurgent columns. Rather, Excel will simply overwrite any data in as many adjoining columns to the right of the data as are required by the new columns. But we’re fortunate in this case; we’re halving the current column into two – and so only one existing column will be overwritten – one of the columns we don’t need: in_reply_to. Click Finish. You should see:

Now date has been severed from time. (Note by the way the original column title – created_at – has also been sundered, but that’s not a big deal. Just retitle it, and also title the new column carrying the time data.)

We’re not finished, because we still need to see day-of-week, hour, and minute fields in order to empower our analysis (I’m not providing seconds as a unit of time though the data are available; but operating at that level of precision will start to get messy here). In the E column, the one containing the extraneous “source” data, type in E2

=WEEKDAY(C2)

You should return the value 5, or Thursday. Copy that formula down the column and retitle it Day or something like it. In the vacant G column type in G2

=HOUR(D2)

And copy down the column (and title the column, too). I get 13 in G2, as I’m operating with European time settings.

And in H2 type

=MINUTE(D2)

And copy down the column (it needs a header, too).

Your workbook should look something like this:

And if you haven’t been saving the workbook throughout, save it now!

Now the sheet is primed for analysis. Let’s see what we can do with it.

In the meantime, just remember – if you stop following Lady Gaga, you’re an accessory to tax evasion.

Leave a comment