A Bit of Streamlining

When we last exchanged business cards you’ll recall my introduction of the Text to Columns feature, a time-honored, relatively self-evident means for farming out data amassed into one cell into a set of usable columns:

But in the course of one my solidtudinous walks in the forest it occurred to me that the lines above could be drawn more sparingly:

Here the lines flank all the incipient date and time data, but this will work nevertheless; the resulting expression retains a numerical, as opposed to text, character, and as such remains friendly to the WEEKDAY, YEAR, HOUR, etc. class of time-culling functions.

Some Watchwords About the Data

By way of additional review I’ll remind you that these posts draw upon tweet downloads emanating from the www.twdocs.com site, and in the intervening week during which I’ve splashed around the data I’ve learned some additional, cautionary things which you need to know, but which can be reconciled pretty easily:

- A two-word search, e.g., Obama Romney, appears to proceed under the aegis of an OR operator. That is, you may turn up a tweet containing only one of the two words.

- For a small proportion of downloaded tweets, the searched-for term(s) may not appear at all. I’ve emailed twdocs about this curiosity, and as of this writing have yet to hear from them.

Of course these anomalies can compromise your takes on the data, but again, there’s a practicable way to deal with these loose ends. Say I’m requesting tweets containing Obama Romney. To ascertain that I’m getting what I want once the download materializes, I can click anywhere among the results and turn the Filter feature on (Data tab > Filter in the Sort & Filter button group). Click the down arrow atop the Text field and move into Text Filters > Does Not Contain. Enter:

(Had you been seeking Obama Romney as a contiguous text entry – highly unlikely, in this case – you would have entered both words in the first field.) Click OK, and you should view a small number of records remaining onscreen, their row headers colored blue. Select all the row headers, right-click anywhere among the selection, and click Delete Row. Then turn the Filter button off, and to your relief all the other records – the ones that do contain the terms Obama and Romney – will reappear. Your data will have thereby been cleaned. It’s an irritant, but a 30-second one.

The Data

In the interests of continuity I’ve supplied another workbook built on the big data key-term search, but these data were garnered today, Much of the preliminary work, including the data sweep described above, has already been executed. You can get the workbook here:

Now let’s look at some – but only some – of the things you can do with the data.

Tweets per Hour: Calculating Velocity

Once you’ve dropped that passel of tweets onto a worksheet and vetted its data character, you might want to speed-gun the results, that is, count the tweets on the worksheet and divide the sum by the length of time they took to gain a foothold on Twitter’s servers — a simple measure of topic popularity. Working with the data as they’re positioned on Bigdata2, the relevant formula looks something like this:

=COUNT(C2:C1229)/((MAX(C2:C1229)-MIN(C2:C1229))*24)

What, then, is this formula doing? It’s trading on the understanding that dates are really numbers, and as such is determining the largest number – that is, the latest date/time – staking the column, and subtracting from it the smallest number – that is, the earliest date/time. That difference is what’s divided into the tweet count. (Note: you needn’t bind yourself to the precise 1229 last-row value when penning the formulas; you can enter 5000, for example, because Excel will ignore any blank cells here.)

But what about that 24? This curious addendum to the formula acts upon the time segment of the date’s numeric equivalent. To explain: if October 18, 2012 is rendered numerically as 41200 (the number of days distancing it from the baseline January 1, 1900), then 41200.5 stands for noon on that date – the .5 standing for half of the elapsed day. A decimal of .25, then, represents 6:00am – or the passage of one-quarter of the day. And if you multiply .25 by 24, you get 6 – 6 hours that is. And in turn if you subtract the earliest recorded date here from the latest, you get .471794; multiply that number by 24 and you get 11.32 hours – and that’s the number you’d divide into the number of tweets. (Just remember that 6.2 hours isn’t 6:20am; it’s 6:12, because .2 of an hour is a decimally-rendered 12 minutes).

Thus our data drive a per-hour tweet average of 209.0973 (yes, you may want to scale down some decimal points).

Tallying RTs

Another metric of apparent interest out there is retweet frequency, a calculation to which our data are most amenable. (www.retweetrank.com lists the top 10 tweeters whose messages are retweeted; when I last looked Marshall Mathers/AKA Eminem topped the league leaders. He has over 12,000,000 followers, and follows none. You can draw your own conclusions about the zeitgeist.)

Title the G column RTs, click into G2, and enter:

=IF(LEFT(B2,2)=”RT”,1,0)

(The “RT” isn’t case-sensitive, by the way.)

The formula responds to one of two contingencies: If the tweet’s first two characters are RT, a 1 will register in the relevant cell. If the RT condition isn’t met, a 0 is supplied instead. Copy the formula down the column, and in a blank cell of your choosing that’s out of harm’s way, say J1, enter:

=SUM(G2:G1229)/COUNT(B2:B1229)

What’s we’ve done here is simply total the 1s descending the G column and divide that sum by the number of tweets. I get .276059, which presents itself as 27.61% in percent-format taken to two decimals.

A Measure of Content

Once your tweet download is in place you might well want to prospect the data for key words or terms likely to predominate, or trend, across the tweets. Since the tweets circumscribe a finitely-drawn time span, this sort of evidence could be of more than a little journalistic utility.

Here’s what you can do to capture trending terms: Enter the term for which you’re currently searching, say in K1. I’ve entered the word “security” (without the quotes), but it could be anything, and the entry is always changeable. Next, title the H column TrendingTerm or something comparable and enter in H2:

=IF(ISERR(SEARCH(K$1,B2)),0,1)

To explain: this formula owes its efficacy to the SEARCH function, which inspects a cell (here B2) for a search term (the first element or argument, in this case the contents of K1). If found, the starting position of the term is returned. If the item isn’t there, the #VALUE error message commands the cell. Thus if I write

I like brownies.

in cell B16 and brownies in A19,

=SEARCH(A19,B16)

Will yield 8, the character position at which “brownies” begins (you can also enter “brownies” in the formula, in lieu of A19). I should add that SEARCH and FIND are near-twins; the difference is that SEARCH exhibits case-insensitivity

Thus our formula in H2 issues an IF statement: if the searched-for term in cell in K1 doesn’t appear in H2 and spurs an error message as a result, a 0 alights in H2. If the term is found and thus yields a starting position number, H2 receives a 1 instead.

Copy the formula down the H column (remember, you need that absolute reference dollar sign to the immediate left of the 1 in K$1). Again as with our G-column activity, a quaintly binary stream of 1s and 0s plunge down the column. Next, say in K2, enter:

=SUM(H2:H1229)/COUNTA(B2:B1229)

The formula is identical in spirit to our RT frequency calculation: it adds the H column’s values and divides the result by the number of all tweets. For “security” I get a 10.67% incidence rate, denoting the percent of all collected tweets in which “security” appears. If I replace “security” with say, “cloud”, I get 8.71%. You can obviously enter any term, though.

And once you nail that understanding down there’s no reason why you can add additional trend-search columns in I,J,K, etc., calling upon the above formulas (with appropriate cell addressing, of course). You can enter the terms you’re searching in K1, L1, M1, etc., and have the respective columns search for each. Of course “big data” has merely served as an example; this whole exercise can be applied to more provocative tweet searches.

And of Course, Some Pivot Tabling

Of course. Once the data are properly groomed a battery of possible pivot tables awaits. For example, click anywhere in the data and order up a pivot table. For starters you can

drag Date into the Row Labels area.

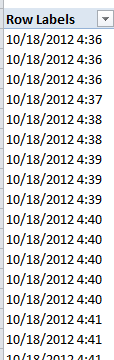

To start, you’ll behold something like this:

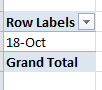

You’ll doubtless want to group these data into day and/or hour and/or minute units. Click anywhere among the dates and Click PivotTable Tools > Options > Group Selection in the Group Button group. You should find the Months parameter selected. Click it off and click Days on. You should see:

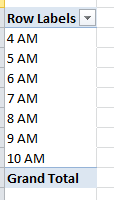

Not very differentiating, is it? That result tells you that all the collected tweets with which we’re working were composed today, bidding us to fine-grain the data. Click on the 18-Oct (or however your date is formatted), return to the Group Selection window and click Days off, and Hours on. You should see:

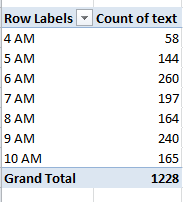

That’s probably more like it. Then drag Text into the Values area. You should see:

Now that’s a touch more interesting, again hitched to the need to understand the operative time zones (see Part 1).

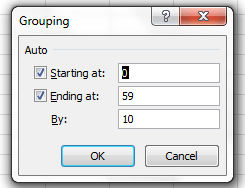

Now for finer granularity still, drag the Minutes field beneath Date in the Row Label area. Click anywhere among the Minute data and revisit the Group Selection window. This time you should see:

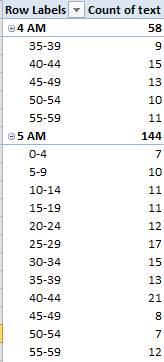

For the sake of variety, replace the 10 in By: with 5. You should see (the screen shot excerpts the whole):

You get the point. Here we see tweet frequencies distributed across 5-minute intervals.

Allow me emphasize that the data run-throughs I’ve recounted here represent only some of the analytical work you can pursue, and only some of the ways in which that work can be realized. There is assuredly more you can do; hope you’re inspired to do and learn more. As a matter of fact, it sounds like the stuff of which all-day workshops are made. Interested? Then give my agent a call.

I just realized – I don’t have an agent.

Leave a comment