The reported levelling of the rates of childhood obesity in the UK has naturally been accounted a good thing (for the article that made the news in full, consult this URL), although for dieters who like their glass half empty the news should perhaps be imbibed with slow, deliberate sips. After all, the study’s abstract prefigures its findings with this bit of curbed enthusiasm:

“More than a third of UK children are overweight or obese, but the prevalence of overweight and obesity may have stabilized between 2004 and 2013.”

It’s possible, then, that the discovered equilibration has more to do with some saturation effect that has already visited its full-on impact upon the cohort of obesity-vulnerable youngsters, but I take no credit for the worthiness of that conjecture, only blame for the conjecture itself.

Be that as it may, the National Child Measurement Programme (NCMP) has something to say about the development as well, here for the years 2007/8 through 2012/13, in the form of this workbook available for download here:

http://www.noo.org.uk/visualisation

(Click the Download link for Child Obesity and excess weight prevalence by Clinical Commissioning Group.)

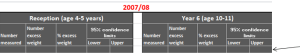

The book breaks out its populations by both “excess weight” and “obesity” measures (the former a superset that takes in the latter) for two school-year age cohorts, what the UK calls Reception (ages 4-5) and Year 6 (10-11). (Look here for the BMI formulas, both for kilograms and pounds; the workbook’s Notes tab sketches additional methodological background. This study’s sample sizes also operate within the confidence interval limits limned in the Excess weight and Obese tabs. Remember again that our spreadsheet doesn’t invoke the same data compiled in the journal piece. Remember too to review the Notes tab’s explication of data cells marked “s”.)

Now to those data, about which there is a good deal to say. Or correction: now to the data organization. First, and perhaps most prominent, are the fields. We’ve seen this before, and the question begs reiteration: why are data which, for all analytical intents and purposes, belong to the same parameter – e.g. Reception – stationed in a plurality of columns? The resulting barrier to analysis is formidable, though not insurmountable; but surmounting requires us to cut and paste kindred data to the same field, copy and properly align the CCG (Clinical Commissioning Group) identifiers down columns A and B via a duly-diligent copy and paste, and make room for a year field in which that differentiating datum needs to be copied down, too. And you’ll probably need to cut-and-paste the Year 6 data, too, and presumably to a worksheet all its own, the better to disentangle these from the Reception figures, though that larger positional issue needs to be thought through – because in fact the workbook draws itself around two pairs of variables – the Reception/Year 6 axis, and the Excess weight/Obese binary. (It could at the same time be possible to jam all of these into a single grand sheet, by introducing, say, new School Year and Weight Category fields, thus lending themselves to additional breakouts along those lines, but I’m holding my jury out on this unifying tack.) The larger point, though: if you’re serious about doing something serious with the data, you’ll need to think about all these prior somethings, too, before your analysis proceeds.
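For readers who would sooner script that cut-and-paste than perform it by hand, here is a minimal sketch of the same reshaping in plain Python. It assumes the rows have already been read out of the sheet as dictionaries; the field names (`ccg_code`, `ccg_name`, `prevalence` and so on), the CCG codes and the figures are all invented for illustration and are not taken from the workbook.

```python
# Hypothetical wide layout: one row per CCG, one column per year.
# Codes, names and figures are invented for illustration only.
wide_rows = [
    {"ccg_code": "X01", "ccg_name": "Example CCG A", "2007/08": 22.5, "2008/09": 22.9},
    {"ccg_code": "X02", "ccg_name": "Example CCG B", "2007/08": 24.1, "2008/09": 23.8},
]

# Columns that identify the CCG rather than carry a year's measurement.
ID_FIELDS = ("ccg_code", "ccg_name")


def wide_to_long(rows, school_year, weight_category):
    """Turn one-column-per-year rows into one record per CCG per year."""
    long_rows = []
    for row in rows:
        for key, value in row.items():
            if key in ID_FIELDS:
                continue  # identifiers are copied down, not unpivoted
            long_rows.append({
                "ccg_code": row["ccg_code"],
                "ccg_name": row["ccg_name"],
                "school_year": school_year,          # e.g. Reception or Year 6
                "weight_category": weight_category,  # e.g. Excess weight or Obese
                "year": key,
                "prevalence": value,
            })
    return long_rows


long_rows = wide_to_long(wide_rows, "Reception", "Excess weight")
for record in long_rows:
    print(record)
```

Calling the function once per tab and cohort, then concatenating the results, would yield the single grand sheet contemplated above, with School Year and Weight Category as fields of their own; whether that unified layout is the better one remains the open question.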

But quite apart from those necessary considerations you’ll also have to do something about the superabundance of merged cells in rows 1 through 4. These can’t coexist with your pivot-tabling intentions, and neither can the blank row that prohibitively separates those potential field headers from the data. The simplest rejoinder, of course: move the putative field names above down a row, but only after you’ve made sure that the now-unwelcome text entries still higher above these perch at least one blank row away from what you’re envisioning as the finalized data set (and if you’re going this far you’ll find that, once unmerged, the cell now invested with the “Number measured” header, for example, has been bumped two rows above its eventual place atop the dataset). And, for crowningly good measure, you’ll likewise have to unmerge the sheet’s title wrapped across the A1 supercell. (I also don’t see the immediate pertinence to our data of the 32,000 LSOA-CCG lookup records in that so-named tab, apart from the “The analysis uses a best-fit 2001 LSOA to 2013 CCG lookup created by PHE” declaration in the Notes tab. LSOA abbreviates lower layer super output area; for more, look here.)
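If the header rows are handled in code rather than in the sheet, the same tidying might look something like the sketch below. It assumes that unmerging leaves a merged cell's text in its first cell and blanks (`None`) in the rest, and that the headers occupy two stacked rows: an upper row of groupings and a lower row of field names. Apart from “Number measured”, the header texts here are hypothetical stand-ins, and the function name is my own.

```python
def flatten_headers(group_row, field_row):
    """Collapse two stacked header rows into one field name per column.

    group_row: upper header row; a merged cell spanning several columns
               shows its text once, followed by None.
    field_row: lower header row; None where the upper cell was merged
               downward and so has no sub-field of its own.
    """
    names = []
    current_group = None
    for group, field in zip(group_row, field_row):
        if group not in (None, ""):
            current_group = group  # carry the grouping across its span
        if field in (None, ""):
            names.append(str(current_group))
        else:
            names.append(f"{current_group} {field}")
    return names


# Hypothetical header rows as they might look after unmerging.
upper = ["CCG code", "CCG name", "2007/08", None, "2008/09", None]
lower = [None, None, "Number measured", "% excess weight",
         "Number measured", "% excess weight"]

print(flatten_headers(upper, lower))
```

The result is one unambiguous name per column, which is the precondition for a pivot table, or for any reshaping of the year columns into a single year field.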

To repeat – if you’re not prepared to do the reconstitutive work schematized above I don’t think you’ll be able to take the data very far; and why the analysts and/or story-seekers should be made to steel themselves for a rough ride across this obstacle course makes for one very good rhetorical question, though of course we’ve asked it before.

In fact, though, the data look pretty interesting, if now untamed. Your mission – should you choose to accept it – is to domesticize it all.

But don’t worry – the workbook won’t self-destruct.
